About the CoMSES Model Library

Our mission is to help computational modelers develop, document, and share their computational models in accordance with community standards and good open science and software engineering practices. Model authors can publish their model source code in the Computational Model Library with narrative documentation as well as metadata that supports open science and emerging norms that facilitate software citation, computational reproducibility / frictionless reuse, and interoperability. Model authors can also request private peer review of their computational models. Models that pass peer review receive a DOI once published.

All users of models published in the library must cite model authors when they use and benefit from their code.

Please check out our model publishing tutorial and feel free to contact us if you have any questions or concerns about publishing your model(s) in the Computational Model Library.

We also maintain a curated database of over 7500 publications on agent-based and individual-based models, with detailed metadata on code availability and bibliometric information on the landscape of ABM/IBM publications, which we welcome you to explore.

Displaying 10 of 189 results for "Ed Manley"

Peer reviewed FISHCODE - FIsheries Simulation with Human COmplex DEcision-making

Birgit Müller, Gunnar Dressler, Jonas Letschert, Christian Möllmann, Vanessa Stelzenmüller | Published Monday, August 05, 2024

FIsheries Simulation with Human COmplex DEcision-making (FISHCODE) is an agent-based model to depict and analyze current and future spatio-temporal dynamics of three German fishing fleets in the southern North Sea. Every agent (fishing vessel) makes daily decisions about if, what, and how long to fish. Weather, fuel and fish prices, as well as the actions of their colleagues influence agents’ decisions. To combine behavioral theories and enable agents to make dynamic decisions, we implemented the Consumat approach, a framework in which agents’ decisions vary in complexity and social engagement depending on their satisfaction and uncertainty. Every agent has three satisfactions and two uncertainties representing different behavioral aspects, i.e. habitual behavior, profit maximization, competition, conformism, and planning insecurity. Availability of extensive information on fishing trips allowed us to parameterize many model parameters directly from data, while others were calibrated using pattern-oriented modelling. Model validation showed that spatially and temporally aggregated ABM outputs were in realistic ranges when compared to observed data. Our ABM hence represents a tool to assess the impact of the ever-growing challenges to North Sea fisheries and provides insight into fisher behavior beyond profit maximization.
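To illustrate how a Consumat-style agent might pick a decision mode from its satisfaction and uncertainty, here is a minimal Python sketch. It is not the FISHCODE implementation: the single scalar `satisfaction` and `uncertainty` inputs, the 0.5 thresholds, and the function name `choose_strategy` are assumptions made for illustration, and the four strategy names follow the general Consumat framework rather than anything stated in this entry. How FISHCODE aggregates its three satisfactions and two uncertainties is not described here.

```python
from enum import Enum


class Strategy(Enum):
    REPETITION = "repetition"                 # satisfied and certain
    IMITATION = "imitation"                   # satisfied but uncertain
    DELIBERATION = "deliberation"             # dissatisfied but certain
    SOCIAL_COMPARISON = "social comparison"   # dissatisfied and uncertain


def choose_strategy(satisfaction, uncertainty,
                    satisfaction_threshold=0.5,
                    uncertainty_threshold=0.5):
    """Map one satisfaction and one uncertainty value to a decision mode.

    Thresholds are hypothetical; a real model would calibrate them.
    """
    satisfied = satisfaction >= satisfaction_threshold
    uncertain = uncertainty > uncertainty_threshold
    if satisfied and not uncertain:
        return Strategy.REPETITION
    if satisfied and uncertain:
        return Strategy.IMITATION
    if not satisfied and not uncertain:
        return Strategy.DELIBERATION
    return Strategy.SOCIAL_COMPARISON
```

In this sketch, uncertain agents turn to social strategies (looking at peers), while dissatisfied agents invest more cognitive effort, which matches the entry's description of decisions varying in complexity and social engagement.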

A simple emulation-based computational model

Carlos M Fernández-Márquez, Francisco J Vázquez | Published Tuesday, May 21, 2013 | Last modified Tuesday, February 05, 2019

Emulation is one of the simplest and most common mechanisms of social interaction. In this paper we introduce a descriptive computational model that attempts to capture the underlying dynamics of social processes led by emulation.

A Model of Making

Bruce Edmonds | Published Friday, January 29, 2016 | Last modified Wednesday, December 07, 2016

This model provides the infrastructure to model the activity of making. Individuals use resources they find in their environment, plus those they buy, to design, construct and deconstruct items. It represents plans and complex objects explicitly.

Crowdworking Model

Georg Jäger | Published Wednesday, September 25, 2019

The purpose of this agent-based model is to compare different variants of crowdworking in a general way, so that the obtained results are independent of specific details of the crowdworking platform. It features many adjustable parameters that can be used to calibrate the model to empirical data, but even when uncalibrated it yields essential results about crowdworking in general.

Agents compete for contracts on a virtual crowdworking platform. Each agent is defined by various properties like qualification and income expectation. Agents that are unable to turn a profit have a chance to quit the crowdworking platform, and new crowdworkers can replace them. Thus the model has features of an evolutionary process, filtering out the ill-suited agents and generating a realistic distribution of agents from an initially random one. To simulate a stable system, the number of contracts issued per day can be set constant, as well as the number of crowdworkers. If one is interested in a dynamically changing platform, the simulation can also be initialized in a way that increases or decreases the number of crowdworkers or number of contracts over time. Thus, a large variety of scenarios can be investigated.
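The evolutionary filtering described above can be sketched in a few lines of Python. This is an illustrative toy, not the published model: the `Worker` fields beyond qualification and income expectation, the contract attributes (`required`, `budget`), the rule that the cheapest eligible worker wins, the fixed `daily_cost`, and all parameter values are assumptions.

```python
import random
from dataclasses import dataclass


@dataclass
class Worker:
    qualification: float       # skill level in [0, 1]
    income_expectation: float  # price asked per contract in [0, 1]
    balance: float = 0.0       # running profit (hypothetical bookkeeping)


def new_worker(rng):
    # New entrants start with random properties.
    return Worker(rng.random(), rng.random())


def simulate(days, n_workers=50, contracts_per_day=20,
             daily_cost=0.2, quit_probability=0.1, seed=0):
    rng = random.Random(seed)
    workers = [new_worker(rng) for _ in range(n_workers)]
    for _ in range(days):
        # A constant number of contracts is issued each day (stable scenario).
        for _ in range(contracts_per_day):
            required, budget = rng.random(), rng.random()
            eligible = [w for w in workers
                        if w.qualification >= required
                        and w.income_expectation <= budget]
            if eligible:
                # Assumed rule: the cheapest eligible worker wins.
                winner = min(eligible, key=lambda w: w.income_expectation)
                winner.balance += winner.income_expectation
        for i, w in enumerate(workers):
            w.balance -= daily_cost
            # Unprofitable workers may quit and are replaced one-for-one.
            if w.balance < 0 and rng.random() < quit_probability:
                workers[i] = new_worker(rng)
    return workers
```

Because every quitting worker is replaced immediately, the population size stays constant; a dynamically changing platform could be approximated by varying `contracts_per_day` or the replacement rule over time.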

Individual-based modelling as a tool for elephant poaching mitigation

Ernesto Carrella, Richard Bailey, Emily Neil, Jens Koed Madsen, Nicolas Payette | Published Tuesday, June 18, 2019 | Last modified Thursday, August 01, 2019

We develop an IBM that predicts how interactions between elephants, poachers, and law enforcement affect poaching levels within a virtual protected area. The model is theoretical at this stage and is not meant to provide a realistic depiction of poaching, but instead to demonstrate how IBMs can expand upon the existing modelling work done in this field, and to provide a framework for future research. The model could be further developed into a useful management support tool to predict the outcomes of various poaching mitigation strategies at real-world locations. The model was implemented in NetLogo version 6.1.0.

We first compare a scenario in which poachers have prescribed, non-adaptive decision-making and move randomly across the landscape to one in which poachers adaptively respond to their memories of elephant locations and of where other poachers have been caught by law enforcement. We then compare a situation in which ranger effort is distributed unevenly across the protected area to one in which rangers patrol by adaptively following elephant matriarchal herds.

06 EiLab V1.36 – Entropic Index Laboratory

Garvin Boyle | Published Saturday, January 31, 2015 | Last modified Friday, April 14, 2017

EiLab explores the role of entropy in simple economic models. EiLab is one of several models exploring the dynamics of sustainable economics – PSoup, ModEco, EiLab, OamLab, MppLab, TpLab, and CmLab.

01b ModEco NLG V1.39 – Model Economies – The PMM

Garvin Boyle | Published Friday, April 14, 2017

It is very difficult to model a sustainable intergenerational biophysical/financial economy. ModEco NLG is one of a series of models exploring the dynamics of sustainable economics – PSoup, ModEco, EiLab, OamLab, MppLab, TpLab, and CmLab.

06 EiLab V1.40 – Entropic Index Laboratory

Garvin Boyle | Published Monday, March 19, 2018

There is a new type of economic model called a capital exchange model, in which the biophysical economy is abstracted away and the interaction of units of money is studied. Benatti, Drăgulescu and Yakovenko described at least eight capital exchange models – now referred to collectively as the BDY models – which are replicated as models A through H in EiLab. In recent writings, Yakovenko goes on to show that the entropy of these monetarily isolated systems rises to a maximal possible value as the model approaches steady state, and remains there, in analogy to the second law of thermodynamics. EiLab demonstrates this behaviour. However, it must be noted that we are NOT talking about thermodynamic entropy. Heat is not being modeled – only simple exchanges of cash. But the same statistical formulae apply.

In three unpublished papers and a collection of diary notes and conference presentations (all available with this model), the concept of “entropic index” is defined for use in agent-based models (ABMs), with a particular interest in sustainable economics. Models I and J of EiLab are variations of the BDY model especially designed to study the Maximum Entropy Principle (MEP – model I) and the Maximum Entropy Production Principle (MEPP – model J) in ABMs. Both the MEPP and H.T. Odum’s Maximum Power Principle (MPP) have been proposed as organizing principles for complex adaptive systems. The MEPP and the MPP are two sides of the same coin, and an understanding of their implications is key, I believe, to understanding economic sustainability. Both of these proposed (and not widely accepted) principles describe the role of entropy in non-isolated systems in which complexity is generated and flourishes, such as ecosystems and economies.

EiLab is one of several models exploring the dynamics of sustainable economics – PSoup, ModEco, EiLab, OamLab, MppLab, TpLab, and CmLab.
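As a rough illustration of the capital exchange idea in this entry, the Python sketch below moves single units of money between randomly paired agents in a closed system and measures the Shannon entropy of the resulting wealth distribution. It is a generic toy, not one of EiLab's models A through J: the exchange rule, the parameter values, and the function names `simulate_exchange` and `wealth_entropy` are assumptions, and the entropy here is a plain histogram entropy rather than the "entropic index" defined in the author's papers.

```python
import math
import random
from collections import Counter


def wealth_entropy(wealth):
    """Shannon entropy (in nats) of the distribution of wealth values."""
    counts = Counter(wealth)
    n = len(wealth)
    return -sum((c / n) * math.log(c / n) for c in counts.values())


def simulate_exchange(n_agents=200, initial_money=10, steps=20000, seed=0):
    """Closed system: money is only moved between agents, never created."""
    rng = random.Random(seed)
    wealth = [initial_money] * n_agents
    for _ in range(steps):
        payer, receiver = rng.sample(range(n_agents), 2)
        # Assumed rule: transfer one unit if the payer can afford it.
        if wealth[payer] > 0:
            wealth[payer] -= 1
            wealth[receiver] += 1
    return wealth
```

All agents start with identical wealth, so the initial entropy is zero; as exchanges accumulate, the distribution spreads out and the entropy grows, while the total amount of money stays fixed. Recording `wealth_entropy` every few hundred steps would show the approach to a plateau that the entry describes.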

TunaFisher ABM

Guus Ten Broeke | Published Wednesday, January 13, 2021

TunaFisher ABM simulates the decisions of fishing companies and fishing vessels of the Philippine tuna purse seinery operating in the Celebes and Sulu Seas.

High fishing effort remains in many of the world’s fisheries, including the Philippine tuna purse seinery, despite a variety of policies that have been implemented to reduce it. These policies have predominantly focused on models of cause and effect which ignore the possibility that the intended outcomes are altered by social behavior of autonomous agents at lower scales.

This model is a spatially explicit Agent-based Model (ABM) for the Philippine tuna purse seine fishery, specifically designed to include social behavior and to study its effects on fishing effort, fish stock and industry profit. The model includes economic and social factors of decision making by companies and fishing vessels that have been informed by interviews.

…

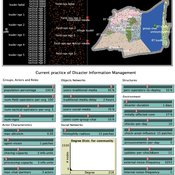

Peer reviewed Share: bottom-up disaster information management

Vittorio Nespeca, Tina Comes, Frances Brazier | Published Monday, December 05, 2022

This model is intended to study the way information is collectively managed (i.e. shared, collected, processed, and stored) in a system and how it performs during a crisis or disaster. Performance is assessed in terms of the system’s ability to provide the information needed to the actors who need it when they need it. There are two main types of actors in the simulation, namely communities and professional responders. Their ability to exchange information is crucial to improve the system’s performance as each of them has direct access to only part of the information they need.

In a nutshell, the following occurs during a simulation. Due to a disaster, a series of randomly occurring disruptive events takes place. The actors in the simulation need to keep track of such events. Specifically, each event generates information needs for the different actors, which increases the information gaps (i.e. the “piles” of unaddressed information needs). In order to reduce the information gaps, the actors need to “discover” the pieces of information they need. The desired behavior or performance of the system is to keep the information gaps as low as possible, that is, to address as many information needs as possible as they occur.
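The loop described above (random events add information needs, discovery removes them) can be sketched as follows. This is a simplified illustration under stated assumptions, not the Share model itself: the two actor labels, the per-step event probability, the number of needs per event, and the independent per-need discovery probability are all hypothetical, and information exchange between actors is left out.

```python
import random


def simulate_information_gaps(steps, event_probability=0.3,
                              needs_per_event=2,
                              discovery_probability=0.5, seed=0):
    """Track each actor's pile of unaddressed information needs over time."""
    rng = random.Random(seed)
    gaps = {"community": 0, "responder": 0}
    history = []
    for _ in range(steps):
        # A disruptive event occurs at random and creates needs for everyone.
        if rng.random() < event_probability:
            for actor in gaps:
                gaps[actor] += needs_per_event
        # Each open need is independently "discovered" with a fixed chance.
        for actor in gaps:
            resolved = sum(1 for _ in range(gaps[actor])
                           if rng.random() < discovery_probability)
            gaps[actor] -= resolved
        history.append(dict(gaps))
    return history
```

The returned history gives the gap size per actor at each step, so system performance in the sense used above could be summarized as, for example, the average gap over the run.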
