About the CoMSES Model Library

Our mission is to help computational modelers at all levels engage in the establishment and adoption of community standards and good practices for developing and sharing computational models. Model authors can freely publish their model source code in the Computational Model Library alongside narrative documentation, open science metadata, and other emerging open science norms that facilitate software citation, reproducibility, interoperability, and reuse. Model authors can also request peer review of their computational models to receive a DOI.

All users of models published in the library must cite model authors when they use and benefit from their code.

Please check out our model publishing tutorial and contact us if you have any questions or concerns about publishing your model(s) in the Computational Model Library.

We also maintain a curated database of over 7,500 publications on agent-based and individual-based models, with additional detailed metadata on code availability and bibliometric information on the landscape of ABM/IBM publications, which we welcome you to explore.

Displaying 4 of 4 results for "maximum entropy production principle"

06 EiLab V1.40 – Entropic Index Laboratory

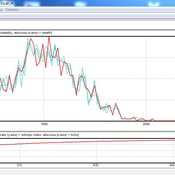

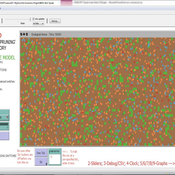

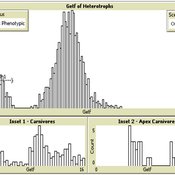

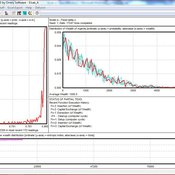

Garvin Boyle | Published Monday, March 19, 2018

There is a new type of economic model called a capital exchange model, in which the biophysical economy is abstracted away and the interaction of units of money is studied. Benatti, Drăgulescu and Yakovenko described at least eight capital exchange models – now referred to collectively as the BDY models – which are replicated as models A through H in EiLab. In recent writings, Yakovenko goes on to show that the entropy of these monetarily isolated systems rises to its maximum possible value as the model approaches steady state and remains there, in analogy with the second law of thermodynamics. EiLab demonstrates this behaviour. However, it must be noted that we are NOT talking about thermodynamic entropy. Heat is not being modeled, only simple exchanges of cash. But the same statistical formulae apply.
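The behaviour described above can be illustrated with a small sketch. It is not EiLab's code and does not reproduce any of models A through H. It assumes a generic rule in which a randomly chosen agent hands one unit of money to another randomly chosen agent, and it measures the Shannon entropy of the resulting wealth histogram. The function names, parameters, and unit-width binning are all illustrative assumptions.

```python
import math
import random
from collections import Counter


def shannon_entropy(wealth, bin_width=1):
    """Shannon entropy (in nats) of the binned wealth histogram.

    This is a statistical entropy of the money distribution,
    not a thermodynamic quantity.
    """
    counts = Counter(w // bin_width for w in wealth)
    n = len(wealth)
    return sum((c / n) * math.log(n / c) for c in counts.values())


def simulate(n_agents=500, start_money=10, steps=100_000, seed=0):
    """Hypothetical one-unit random exchange in a closed money system.

    Assumption: every agent starts with the same amount, and no agent
    may go into debt. Total money is conserved by construction.
    """
    rng = random.Random(seed)
    wealth = [start_money] * n_agents
    history = [shannon_entropy(wealth)]  # entropy is 0.0 at the start
    for step in range(1, steps + 1):
        giver, taker = rng.sample(range(n_agents), 2)
        if wealth[giver] > 0:  # skip the exchange if the giver is broke
            wealth[giver] -= 1
            wealth[taker] += 1
        if step % 10_000 == 0:
            history.append(shannon_entropy(wealth))
    return wealth, history


wealth, history = simulate()
print("total money:", sum(wealth))
print("entropy samples:", [round(h, 2) for h in history])
```

Under these assumptions the sampled entropy starts at zero, because every agent holds the same amount, and climbs as exchanges spread the money unevenly. Whether and how quickly it levels off depends on the number of agents, the starting amount, and the exchange rule chosen.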

In three unpublished papers and a collection of diary notes and conference presentations (all available with this model), the concept of “entropic index” is defined for use in agent-based models (ABMs), with a particular interest in sustainable economics. Models I and J of EiLab are variations of the BDY model especially designed to study the Maximum Entropy Principle (MEP – model I) and the Maximum Entropy Production Principle (MEPP – model J) in ABMs. Both the MEPP and H.T. Odum’s Maximum Power Principle (MPP) have been proposed as organizing principles for complex adaptive systems. The MEPP and the MPP are two sides of the same coin, and an understanding of their implications is key, I believe, to understanding economic sustainability. Both of these proposed (and not widely accepted) principles describe the role of entropy in non-isolated systems in which complexity is generated and flourishes, such as ecosystems and economies.

EiLab is one of several models exploring the dynamics of sustainable economics – PSoup, ModEco, EiLab, OamLab, MppLab, TpLab, and CmLab.

04 TpLab V2.08 – Teleological Pruning Laboratory

Garvin Boyle | Published Saturday, April 15, 2017

Our societal belief systems are pruned by evolution, informing our unsustainable economies. This is one of a series of models exploring the dynamics of sustainable economics – PSoup, ModEco, EiLab, OamLab, MppLab, TpLab, CmLab.

03 MppLab V1.09 – Maximum Power Principle Laboratory

Garvin Boyle | Published Saturday, April 15, 2017

Using webs of replicas of Atwood’s Machine, we explore implications of the Maximum Power Principle. This is one of a series of models exploring the dynamics of sustainable economics – PSoup, ModEco, EiLab, OamLab, MppLab, TpLab, CmLab.

06 EiLab V1.36 – Entropic Index Laboratory

Garvin Boyle | Published Saturday, January 31, 2015 | Last modified Friday, April 14, 2017

EiLab explores the role of entropy in simple economic models. EiLab is one of several models exploring the dynamics of sustainable economics – PSoup, ModEco, EiLab, OamLab, MppLab, TpLab, and CmLab.