About the CoMSES Model Library

Our mission is to help computational modelers develop, document, and share their computational models in accordance with community standards and good open science and software engineering practices. Model authors can publish their model source code in the Computational Model Library with narrative documentation as well as metadata that supports open science and emerging norms that facilitate software citation, computational reproducibility / frictionless reuse, and interoperability. Model authors can also request private peer review of their computational models. Models that pass peer review receive a DOI once published.

All users of models published in the library must cite model authors when they use and benefit from their code.

Please check out our model publishing tutorial and feel free to contact us if you have any questions or concerns about publishing your model(s) in the Computational Model Library.

We also maintain a curated database of over 7500 publications of agent-based and individual-based models, with detailed metadata on the availability of code and bibliometric information on the landscape of ABM/IBM publications, which we welcome you to explore.

Displaying 10 of 1134 results for "J A Cuesta"

Peer reviewed Dynamic Value-based Cognitive Architectures

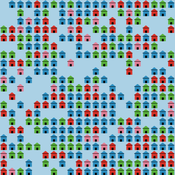

Bart de Bruin | Published Tuesday, November 30, 2021

The intention of this model is to create a universal basis for modeling change in value prioritizations within social simulation. This model illustrates the design of heterogeneous populations within agent-based social simulations by equipping agents with Dynamic Value-based Cognitive Architectures (DVCA-model). The DVCA-model uses the psychological theories on values by Schwartz (2012) and character traits by McCrae and Costa (2008) to create a unique trait- and value prioritization system for each individual. Furthermore, the DVCA-model simulates the impact of both social persuasion and life-events (e.g. information, experience) on the value systems of individuals by introducing the innovative concept of perception thermometers. Perception thermometers, controlled by the character traits, operate as buffers between the internal value prioritizations of agents and their external interactions. By introducing the concept of perception thermometers, the DVCA-model makes it possible to study the dynamics of individual value prioritizations under a variety of internal and external perturbations over extensive time periods. Possible applications are the use of the DVCA-model within artificial sociality, opinion dynamics, social learning modelling, behavior selection algorithms and social-economic modelling.

ForagerNet3_Demography_V2

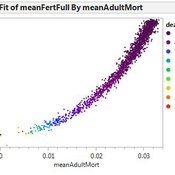

Andrew White | Published Thursday, February 13, 2014

ForagerNet3_Demography_V2 is a non-spatial ABM for exploring hunter-gatherer demography. This version (developed from FN3D_V1) contains code for calculating the ratio of old to young adults (the “OY ratio”) in the living and dead populations.

Spatio-Temporal Dynamic of Risk Model

J Jumadi | Published Tuesday, October 22, 2019 | Last modified Sunday, January 05, 2020

This model aims to simulate the dynamic of risk over time and space.

ForagerNet3_Demography: A Non-Spatial Model of Hunter-Gatherer Demography

Andrew White | Published Thursday, October 17, 2013 | Last modified Thursday, October 17, 2013

ForagerNet3_Demography is a non-spatial ABM for exploring hunter-gatherer demography. Key methods represent birth, death, and marriage. The dependency ratio is an important variable in many economic decisions embedded in the methods.

Peer reviewed General Housing Model

J M Applegate | Published Thursday, May 07, 2020

The General Housing Model demonstrates a basic housing market with bank lending, renters, owners and landlords. This model was developed as a base to which students contributed additional functions during Arizona State University’s 2020 Winter School: Agent-Based Modeling of Social-Ecological Systems.

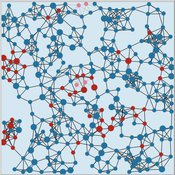

Peer reviewed Virus Transmission with Super-spreaders

J M Applegate | Published Saturday, September 11, 2021

A curious aspect of the Covid-19 pandemic is the clustering of outbreaks. Evidence suggests that 80% of people who contract the virus are infected by only 19% of infected individuals, and that the majority of infected individuals fail to infect another person. Thus, the dispersion of a contagion, $k$, may be of more use in understanding the spread of Covid-19 than the reproduction number, R0.

The Virus Transmission with Super-spreaders model, written in NetLogo, is an adaptation of the canonical Virus Transmission on a Network model and allows the exploration of various mitigation protocols such as testing and quarantines with both homogenous transmission and heterogenous transmission.

The model consists of a population of individuals arranged in a network, where both population and network degree are tunable. At the start of the simulation, a subset of the population is initially infected. As the model runs, infected individuals will infect neighboring susceptible individuals according to either homogenous or heterogenous transmission, where heterogenous transmission models super-spreaders. In this case, k is described as the percentage of super-spreaders in the population and the differing transmission rates for super-spreaders and non super-spreaders. Infected individuals either recover, at which point they become resistant to infection, or die. Testing regimes cause discovered infected individuals to quarantine for a period of time.
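The transmission mechanism described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the NetLogo code of the published model: the ring-lattice network, all parameter names and default values, and the per-step recovery and death chances are assumptions made for the example, and testing and quarantine are left out.

```python
import random

def simulate(n=200, degree=4, initial_infected=5, superspreader_share=0.1,
             rate_super=0.5, rate_normal=0.02, recovery_chance=0.1,
             death_chance=0.01, steps=50, seed=0):
    """Toy network epidemic with super-spreaders (all parameters hypothetical)."""
    rng = random.Random(seed)
    # Assumed topology: a ring lattice where each node links to degree/2
    # neighbours on either side.
    half = degree // 2
    neighbours = {i: [(i + d) % n for d in range(-half, half + 1) if d != 0]
                  for i in range(n)}
    state = {i: "S" for i in range(n)}          # S, I, R (resistant), D (dead)
    for i in rng.sample(range(n), initial_infected):
        state[i] = "I"
    # Heterogeneous transmission: a fixed share of individuals are super-spreaders.
    is_super = {i: rng.random() < superspreader_share for i in range(n)}
    for _ in range(steps):
        new_state = dict(state)                 # synchronous update
        for i in range(n):
            if state[i] != "I":
                continue
            rate = rate_super if is_super[i] else rate_normal
            for j in neighbours[i]:
                if state[j] == "S" and rng.random() < rate:
                    new_state[j] = "I"
            roll = rng.random()
            if roll < death_chance:
                new_state[i] = "D"
            elif roll < death_chance + recovery_chance:
                new_state[i] = "R"
        state = new_state
    counts = {s: 0 for s in "SIRD"}
    for s in state.values():
        counts[s] += 1
    return counts

print(simulate())
```

Setting `rate_super` equal to `rate_normal` gives the homogeneous case, so the two regimes can be compared with the same seed.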

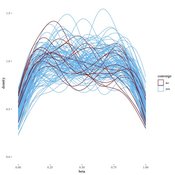

Peer reviewed Modern Wage Dynamics

J M Applegate | Published Sunday, June 05, 2022

The Modern Wage Dynamics Model is a generative model of coupled economic production and allocation systems. Each simulation describes a series of interactions between a single aggregate firm and a set of households through both labour and goods markets. The firm produces a representative consumption good using labour provided by the households, who in turn purchase these goods as desired using wages earned, thus the coupling.

In each model iteration, the firm decides the wage, the price and the labour hours requested. Given price and wage, households decide hours worked based on their utility function for leisure and consumption. A labour market construct chooses the minimum of hours required and aggregate hours supplied. The firm then uses these inputs to produce goods. Given the hours actually worked, the households decide actual consumption, and a market chooses the minimum of goods supplied and aggregate demand. The firm uses information about consumption demand, gained through observing market transactions, to refine its conception of the population’s demand.
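The two market-clearing steps in this iteration both apply a "minimum of the two sides" rule, which can be sketched as follows. The sketch is an assumption-laden illustration rather than the model's implementation: the linear production function, the proportional rationing of hours, and households spending all wage income are hypothetical stand-ins for the utility-based decisions described above, and every function and parameter name is invented for the example.

```python
def short_side(requested, offered):
    """Quantity traded is the smaller of what is requested and what is offered."""
    return min(requested, offered)

def iterate(wage, price, hours_requested, household_hours, productivity=1.0):
    # household_hours: hours each household offers (taken as given here;
    # the model derives them from a utility function).
    hours_supplied = sum(household_hours)
    hours_worked = short_side(hours_requested, hours_supplied)
    # Hypothetical linear production: output proportional to hours worked.
    goods_supplied = productivity * hours_worked
    # Hypothetical rationing: worked hours are split in proportion to hours offered.
    share = hours_worked / hours_supplied if hours_supplied > 0 else 0.0
    incomes = [wage * h * share for h in household_hours]
    # Hypothetical demand: each household wants to spend its whole wage income.
    demand = sum(income / price for income in incomes)
    goods_sold = short_side(goods_supplied, demand)
    return {"hours_worked": hours_worked, "goods_supplied": goods_supplied,
            "demand": demand, "goods_sold": goods_sold}

print(iterate(wage=10, price=5, hours_requested=100, household_hours=[40, 40, 40]))
```

In this example the firm's request is the binding side of the labour market and goods supply is the binding side of the goods market.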

The purpose of this model is to explore the general behaviour of an economy with coupled production and allocation systems, as well as to explore the effects of various policies on wage and production, such as minimum wage, tax credits, unemployment benefits, and universal income.

…

Peer reviewed Emergent Firms Model

J M Applegate | Published Friday, July 13, 2018

The Emergent Firm (EF) model is based on the premise that firms arise out of individuals choosing to work together to avail themselves of the benefits of returns-to-scale and coordination. The EF model is a new implementation and extension of Rob Axtell’s Endogenous Dynamics of Multi-Agent Firms model. Like the Axtell model, the EF model describes how economies, composed of firms, form and evolve out of the utility-maximizing activity of individual agents. The EF model includes a cash-in-advance constraint on agents changing employment, as well as a universal credit-creating lender, to explore how costs and access to capital affect the emergent economy and its macroeconomic characteristics such as firm size distributions, wealth, debt, wages and productivity.

PFS - Preference Falsification Simulation (PreFalSim)

Francisco J Miguel, Francisco J. León-Medina, Jordi Tena-Sanchez | Published Monday, July 01, 2019

A model for simulating the evolution of individuals’ preferences, including adaptive agents “falsifying” their own preferences as public opinions. It was built to describe, explore, experiment with and understand how simple heuristics can modulate global opinion dynamics. So far two mechanisms are implemented: a version of Festinger’s reduction of cognitive dissonance, and a version of Goffman’s impression management. In certain social contexts (minority, social rank pressure) some model agents can “fake” their public opinion while internally keeping the opposite preference, but after a number of rounds of this falsifying behaviour, a coherence principle can shift the real or internal preferences closer to those expressed in public.

The Informational Assumptions of Schelling Segregation: An Agent-Based Decomposition of Cue Inference, Cultural Schemas, and Residential Sorting

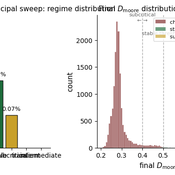

Eric Gladstone | Published Wednesday, May 13, 2026

This computational model accompanies the article “The Informational Assumptions of Schelling Segregation: An Agent-Based Decomposition of Cue Inference, Cultural Schemas, and Residential Sorting.” It implements an agent-based model in which agents infer latent neighborhood-type classes from noisy non-demographic cues through schema-specific diagnostic mappings, update beliefs, and relocate when satisfaction on a preferred latent class falls below a threshold.

The model serves as a mechanism-isolation device for studying the informational architecture underlying Schelling-style residential sorting. It includes the principal sweep configuration (14,400 runs across a seven-parameter grid), a disagreement-metric sub-sweep with permutation-minimized Jensen-Shannon divergence recorded natively, controls (positive, negative, and frozen-belief), a paired-seed cue-channel perturbation experiment, and selected-cell sensitivity sweeps for cue persistence and home-biased mobility.
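One plausible reading of "permutation-minimized Jensen-Shannon divergence" is sketched below: if latent class labels are arbitrary, two belief distributions can be compared under every relabelling of one of them and the smallest divergence kept. This interpretation and the function names are assumptions for illustration; the model's own metric may be defined differently. The brute-force search over permutations is only practical for a small number of classes.

```python
from itertools import permutations
from math import log2

def js_divergence(p, q):
    """Jensen-Shannon divergence (base 2) between two discrete distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        # Terms with x_i == 0 contribute nothing to the KL divergence.
        return sum(a * log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def permutation_minimized_jsd(p, q):
    """Smallest JS divergence over all relabellings of q's classes (assumed reading)."""
    return min(js_divergence(p, [q[i] for i in perm])
               for perm in permutations(range(len(q))))

p = [0.7, 0.2, 0.1]
q = [0.1, 0.7, 0.2]   # same beliefs as p under a different class labelling
print(js_divergence(p, q), permutation_minimized_jsd(p, q))
```

Here the plain divergence is positive while the permutation-minimized value is zero, since `q` is a relabelled copy of `p`.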

The full ODD protocol, parameter manifests, deterministic seed schedules, processed outputs, regenerable figure scripts, the verification test suite, and the satisfaction-mapping audit document are included. Every reported run is deterministic given a (config, seed) pair, and an included audit script verifies bit-for-bit replay on sampled runs.
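The idea of deterministic replay from a (config, seed) pair can be illustrated with a minimal sketch. The `run_model` function below is a hypothetical stand-in, not the model's code, and hashing a JSON serialization is just one assumed way to compare outputs exactly; the included audit script may work differently.

```python
import hashlib
import json
import random

def run_model(config, seed):
    """Hypothetical stand-in for a run: all randomness comes from one seeded RNG."""
    rng = random.Random(seed)
    return [rng.random() * config["scale"] for _ in range(config["steps"])]

def fingerprint(output):
    # JSON float output round-trips exactly in Python, so equal digests
    # mean the two runs produced identical values.
    payload = json.dumps(output).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def verify_replay(config, seed):
    """Run the same (config, seed) pair twice and compare output digests."""
    return fingerprint(run_model(config, seed)) == fingerprint(run_model(config, seed))

config = {"scale": 2.0, "steps": 5}
print(verify_replay(config, seed=42))
```

Keeping every random draw behind a single seeded generator, rather than the global `random` module state, is what makes the replay check meaningful in this sketch.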
