About the CoMSES Model Library

Our mission is to help computational modelers develop, document, and share their computational models in accordance with community standards and good open science and software engineering practices. Model authors can publish their model source code in the Computational Model Library with narrative documentation as well as metadata that supports open science and emerging norms that facilitate software citation, computational reproducibility / frictionless reuse, and interoperability. Model authors can also request private peer review of their computational models. Models that pass peer review receive a DOI once published.

All users of models published in the library must cite model authors when they use and benefit from their code.

Please check out our model publishing tutorial and feel free to contact us if you have any questions or concerns about publishing your model(s) in the Computational Model Library.

We also maintain a curated database of over 7,500 publications of agent-based and individual-based models, with detailed metadata on code availability and bibliometric information on the landscape of ABM/IBM publications, which we welcome you to explore.

NoD-Neg: A Non-Deterministic model of affordable housing Negotiations (peer reviewed)

Aya Badawy, Nuno Pinto, Richard Kingston | Published Sunday, September 08, 2024

The Non-Deterministic model of affordable housing Negotiations (NoD-Neg) is designed to generate hypotheses about the possible outcomes of negotiating affordable housing obligations in new developments in England. By outcomes we mean the probability of the negotiation failing and/or the different possible agreements.

The model focuses on two negotiations that are key to the provision of affordable housing. The first is between a developer (DEV), who submits a planning application for approval, and the relevant Local Planning Authority (LPA), which is responsible for reviewing the application and enforcing the affordable housing obligations. The second is between the developer and a Registered Social Landlord (RSL), who buys the affordable units from the developer and rents them out; the two negotiate the price at which the affordable units are sold to the RSL.

The model runs the two negotiations on the same development project several times, enabling the agents representing stakeholders to apply different negotiation tactics (different agendas and concession-making tactics) and hence to explore the range of possible outcomes.

The model produces three types of outputs: (i) histograms showing the distribution of the negotiation outcomes in all the simulation runs and the probability of each outcome; (ii) a data file with the exact values shown in the histograms; and (iii) a conversation log detailing the exchange of messages between agents in each simulation run.
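The step from many simulation runs to "the probability of each outcome" can be illustrated with a minimal sketch. It assumes that each run ends in a single labelled outcome and estimates each outcome's probability as its relative frequency across runs. The function names and the outcome labels (`failed`, `agreement_A`, `agreement_B`) are hypothetical placeholders, not NoD-Neg's actual code or output categories.

```python
from collections import Counter


def outcome_probabilities(outcomes):
    """Estimate each outcome's probability as its relative frequency across runs."""
    if not outcomes:
        raise ValueError("need at least one simulation run")
    counts = Counter(outcomes)
    total = len(outcomes)
    # Sort by label so the resulting histogram order is stable
    return {label: counts[label] / total for label in sorted(counts)}


def text_histogram(probabilities, width=40):
    """Render a simple text histogram with one bar per outcome."""
    lines = []
    for label, p in probabilities.items():
        bar = "#" * round(p * width)
        lines.append(f"{label:<12} {bar} {p:.2f}")
    return "\n".join(lines)


# Hypothetical outcomes of 10 runs (illustrative labels only, not model output)
runs = ["failed", "agreement_A", "agreement_B", "failed", "agreement_A",
        "agreement_A", "failed", "agreement_B", "agreement_A", "failed"]
probs = outcome_probabilities(runs)
print(probs)  # {'agreement_A': 0.4, 'agreement_B': 0.2, 'failed': 0.4}
print(text_histogram(probs))
```

Relative frequencies are only estimates of the underlying probabilities; running more repetitions gives a more stable picture of the outcome distribution.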

Open Peer Review Model

Federico Bianchi | Published Monday, May 24, 2021

This is an agent-based model of a population of scientists who alternately author or review manuscripts submitted to a scholarly journal for peer review. Peer-review evaluation can be either ‘confidential’, i.e. the identities of authors and reviewers are not disclosed, or ‘open’, i.e. the authors’ identity is disclosed to reviewers. The quality of submitted manuscripts varies according to their authors’ resources, which in turn vary according to their number of publications. Reviewers assess the assigned manuscript’s quality either reliably or unreliably, depending on varying behavioural assumptions, i.e. direct or indirect reciprocation of their past outcomes as authors, or deference towards higher-status authors.

The PRIF Model

Davide Secchi | Published Friday, November 08, 2019

This model considers Peer Reviewing under the influence of the Impact Factor (PRIF), and its purpose is to explore whether this infamous metric affects the assessment of papers under review. The idea is to consider two types of reviewers: those who are agnostic towards the IF (IU1) and those who believe it is a measure of journal (and article) quality (IU2). This perception is reflected in the evaluation, because the perceived scientific value of a paper becomes a function of the journal to which it has been submitted. Various mechanisms for updating reviewer preferences are also implemented.

Peer Review Game

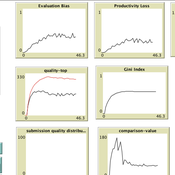

Giangiacomo Bravo, Flaminio Squazzoni, Francisco Grimaldo, Federico Bianchi | Published Monday, April 30, 2018

NetLogo software for the Peer Review Game model. It represents a population of scientists, each endowed with a share of a fixed pool of resources. At each step, scientists decide how to allocate their resources between submitting manuscripts and reviewing others’ submissions. The quality of submissions and reviews depends on the amount of resources allocated and on a biased perception of submission quality. Scientists can follow different allocation strategies, either simply reacting to the outcome of their previous submission or comparing their outcome with the quality of published papers. The overall bias of selected submissions and the quality of published papers are computed at each step.

Peer Review with Multiple Reviewers

Flaminio Squazzoni, Federico Bianchi | Published Thursday, September 10, 2015

This ABM looks at the effect of multiple reviewers and their behaviour on the quality and efficiency of peer review. It models a community of scientists who alternately act as “author” or “reviewer” at each turn.

PR-M: The Peer Review Model

Mario Paolucci, Francisco Grimaldo | Published Sunday, November 10, 2013 | Last modified Wednesday, July 01, 2015

This is an agent-based model of peer review built on three entities: papers, scientists and conferences. The model has been implemented on a BDI platform (Jason) that allows both parameter and mechanism exploration.

Peer Review Model

Flaminio Squazzoni, Claudio Gandelli | Published Wednesday, September 05, 2012 | Last modified Saturday, April 27, 2013

This model looks at the implications of author/referee interaction for the quality and efficiency of peer review. It allows investigation of the importance of various reciprocity motives for ensuring cooperation. Peer review is modelled as a process based on knowledge asymmetries and subject to evaluation bias. The model includes various simulation scenarios to test different interaction conditions and author and referee behaviour, as well as various indexes that measure the quality and efficiency of evaluation […]