About the CoMSES Model Library more info

Our mission is to help computational modelers develop, document, and share their computational models in accordance with community standards and good open science and software engineering practices. Model authors can publish their model source code in the Computational Model Library with narrative documentation as well as metadata that supports open science and emerging norms that facilitate software citation, computational reproducibility / frictionless reuse, and interoperability. Model authors can also request private peer review of their computational models. Models that pass peer review receive a DOI once published.

All users of models published in the library must cite model authors when they use and benefit from their code.

Please check out our model publishing tutorial and feel free to contact us if you have any questions or concerns about publishing your model(s) in the Computational Model Library.

We also maintain a curated database of over 7500 publications of agent-based and individual based models with detailed metadata on availability of code and bibliometric information on the landscape of ABM/IBM publications that we welcome you to explore.

Displaying 4 of 4 results misinformation clear search

Peer reviewed A dynamic identity model for misinformation in social networks

emdhar | Published Friday, February 27, 2026A dynamic identity model for misinformation in social networks, an agent-based model of social identity and misinformation dynamics.

I developed this model as a part of my master’s thesis, “Does social identity drive belief and persistence in online misinformation? An agent-based modelling approach” at University College Dublin, Ireland (2024-2025).

The purpose of this model is to further understand the dynamics of misinformation sharing as an expression of social identity. I introduce a framework to understand the influence of self-categorisation on misinformation persistence in social network. It integrates a social learning model with the Dynamic Identity Model for Agents (DIMA) using simple logic to simulate the social trade-offs driving misinformation and observe the effects on misinformation spread.

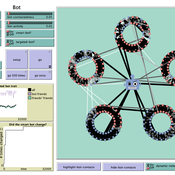

How do bots influence beliefs on social media? Why do beliefs propagated by social bots spread far and wide, yet does their direct influence appear to be limited?

This model extends Axelrod’s model for the dissemination of culture (1997), with a social bot agent–an agent who only sends information and cannot be influenced themselves. The basic network is a ring network with N agents connected to k nearest neighbors. The agents have a cultural profile with F features and Q traits per feature. When two agents interact, the sending agent sends the trait of a randomly chosen feature to the receiving agent, who adopts this trait with a probability equal to their similarity. To this network, we add a bot agents who is given a unique trait on the first feature and is connected to a proportion of the agents in the model equal to ‘bot-connectedness’. At each timestep, the bot is chosen to spread one of its traits to its neighbors with a probility equal to ‘bot-activity’.

The main finding in this model is that, generally, bot activity and bot connectedness are both negatively related to the success of the bot in spreading its unique message, in equilibrium. The mechanism is that very active and well connected bots quickly influence their direct contacts, who then grow too dissimilar from the bot’s indirect contacts to quickly, preventing indirect influence. A less active and less connected bot leaves more space for indirect influence to occur, and is therefore more successful in the long run.

Controlling the misinformation diffusion in social media by the effect of different classes of agents

Ali Khodabandeh Yalabadi | Published Thursday, October 05, 2023An agent-based framework to simulate the diffusion process of a piece of misinformation according to the SBFC model in which the fake news and its debunking compete in a social network. Considering new classes of agents, this model is closer to reality and proposed different strategies how to mitigate and control misinformation.

Information Spread

Aaron Beck | Published Thursday, December 02, 2021Our model shows how disinformation spreads on a random network of individuals. The network is weighted and directed. We are looking at how different factors affect how fast, or how many people get “infected” with the misinformation. One of the main factors that we were curious about was perceived trustworthiness. This is because we want to see if people of power, or a high degree of perceived trustworthiness, were able to push misinformation to more people and convert more people to believe the information.